Doc:FEM Learning:Finite Element Method

The finite element method (FEM) is used for finding approximate solutions of partial differential equations (PDEs), such as the heat transport equation, as well as of integral equations. The solution approach is based either on eliminating the differential equation completely (steady state problems), or on rendering the PDE into an approximating system of ordinary differential equations, which is then solved using standard techniques such as finite differences or Runge-Kutta methods.

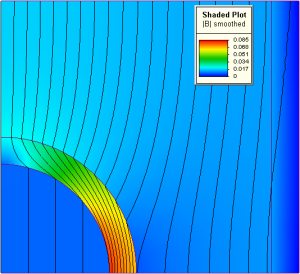

In solving partial differential equations, the primary challenge is to create an equation that approximates the equation to be studied, but is numerically stable, meaning that errors in the input data and intermediate calculations do not accumulate and cause the resulting output to be meaningless. There are many ways of doing this, all with advantages and disadvantages. The Finite Element Method is a good choice for solving partial differential equations over complex domains (like cars and oil pipelines), when the domain changes (as during a solid state reaction with a moving boundary), when the desired precision varies over the entire domain, or when the solution lacks smoothness. For instance, in simulating the weather pattern on Earth, it is more important to have accurate predictions over land than over the wide-open sea, a demand that is achievable using the finite element method.

History

The finite element method originated from the need to solve complex elasticity and structural analysis problems in civil engineering and aeronautical engineering. Its development can be traced back to the work of Alexander Hrennikoff (1941) and Richard Courant (1942). While the approaches used by these pioneers are dramatically different, they share one essential characteristic: mesh discretization of a continuous domain into a set of discrete sub-domains. Hrennikoff's work discretizes the domain by using a lattice analogy, while Courant's approach divides the domain into finite triangular subregions for the solution of second order elliptic partial differential equations (PDEs) that arise from the problem of torsion of a cylinder. Courant's contribution was evolutionary, drawing on a large body of earlier results for PDEs developed by Rayleigh, Ritz, and Galerkin. Development of the finite element method began in earnest in the middle to late 1950s for airframe and structural analysis and gathered momentum at the University of Stuttgart through the work of John Argyris and at Berkeley through the work of Ray W. Clough in the 1960s for use in civil engineering.[1] The method was provided with a rigorous mathematical foundation in 1973 with the publication of Strang and Fix's An Analysis of The Finite Element Method, and has since been generalized into a branch of applied mathematics for numerical modeling of physical systems in a wide variety of engineering disciplines, e.g., electromagnetism and fluid dynamics.

The development of the finite element method in structural mechanics is often based on an energy principle, e.g., the virtual work principle or the minimum total potential energy principle, which provides a general, intuitive and physical basis that has a great appeal to structural engineers.

Technical discussion

We will illustrate the finite element method using two sample problems from which the general method can be extrapolated. It is assumed that the reader is familiar with calculus and linear algebra.

P1 is a one-dimensional problem

<math>\mbox{P1}: \begin{cases} u''(x) = f(x) \mbox{ in } (0,1), \\ u(0) = u(1) = 0, \end{cases}</math>

where <math>f</math> is given, <math>u</math> is an unknown function of <math>x</math>, and <math>u''</math> is the second derivative of <math>u</math> with respect to <math>x</math>.

The two-dimensional sample problem is the Dirichlet problem

<math>\mbox{P2}: \begin{cases} u_{xx}(x,y) + u_{yy}(x,y) = f(x,y) & \mbox{in } \Omega, \\ u = 0 & \mbox{on } \partial\Omega, \end{cases}</math>

where <math>\Omega</math> is a connected open region in the <math>(x,y)</math> plane whose boundary <math>\partial \Omega</math> is "nice" (e.g., a smooth manifold or a polygon), and <math>u_{xx}</math> and <math>u_{yy}</math> denote the second derivatives with respect to <math>x</math> and <math>y</math>, respectively.

The problem P1 can be solved "directly" by computing antiderivatives. However, this method of solving the boundary value problem works only when there is a single spatial dimension, and it does not generalize to higher-dimensional problems or to problems like <math>u + u'' = f</math>. For this reason, we will develop the finite element method for P1 and outline its generalization to P2.

Our explanation will proceed in two steps, which mirror two essential steps one must take to solve a boundary value problem (BVP) using the FEM. In the first step, one rephrases the original BVP in its weak, or variational, form. Little to no computation is usually required for this step; the transformation is done by hand on paper. The second step is the discretization, where the weak form is discretized in a finite dimensional space. After this second step, we have concrete formulae for a large but finite dimensional linear problem whose solution will approximately solve the original BVP. This finite dimensional problem is then implemented on a computer.

Variational formulation

The first step is to convert P1 and P2 into their variational equivalents. If <math>u</math> solves P1, then for any smooth function <math>v</math> that satisfies the displacement boundary conditions, i.e. <math>v = 0</math> at <math>x = 0</math> and <math>x = 1</math>, we have

(1) <math>\int_0^1 f(x)v(x) \, dx = \int_0^1 u''(x)v(x) \, dx.</math>

Conversely, if for a given <math>u</math>, (1) holds for every smooth function <math>v(x)</math>, then one may show that this <math>u</math> will solve P1. (The proof is nontrivial and uses Sobolev spaces.)

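By using integration by parts on the right-hand side of (1), we obtain

(2) <math>\int_0^1 f(x)v(x) \, dx = \int_0^1 u''(x)v(x) \, dx = u'(x)v(x)\Big|_0^1 - \int_0^1 u'(x)v'(x) \, dx = -\int_0^1 u'(x)v'(x) \, dx = -\phi(u,v),</math>

where we have used the assumption that <math>v(0) = v(1) = 0</math>.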
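As a numerical sanity check of this integration by parts step, the following minimal Python sketch compares the integral of <math>fv</math> with minus the integral of <math>u'v'</math> for one sample pair. The choices <math>u(x) = \sin(\pi x)</math> (so that <math>f = u'' = -\pi^2 \sin(\pi x)</math>), <math>v(x) = x(1-x)</math>, the helper name `trapezoid` and the quadrature grid are illustrative assumptions, not part of the original text.

```python
import numpy as np

def trapezoid(y, x):
    """Composite trapezoidal rule, written out to avoid NumPy version differences."""
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x)))

x = np.linspace(0.0, 1.0, 20001)

# Assumed sample data: u(x) = sin(pi x), hence f = u'' = -pi^2 sin(pi x).
u_prime = np.pi * np.cos(np.pi * x)
f = -np.pi**2 * np.sin(np.pi * x)

# Assumed test function v(x) = x (1 - x), which vanishes at x = 0 and x = 1.
v = x * (1.0 - x)
v_prime = 1.0 - 2.0 * x

lhs = trapezoid(f * v, x)                # integral of f v over (0, 1)
rhs = -trapezoid(u_prime * v_prime, x)   # minus the integral of u' v', i.e. -phi(u, v)

print(lhs, rhs)
```

Both printed numbers should agree up to quadrature error; for this particular pair the exact value of either side is <math>-4/\pi</math>.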

A proof outline of existence and uniqueness of the solution

We can define <math>H_0^1(0,1)</math> to be the absolutely continuous functions of <math>(0,1)</math> that are <math>0</math> at <math>x = 0</math> and <math>x = 1</math>. Such functions are "once differentiable" and it turns out that the symmetric bilinear map <math>\phi</math> then defines an inner product which turns <math>H_0^1(0,1)</math> into a Hilbert space (a detailed proof is nontrivial). On the other hand, the left-hand side <math>\int_0^1 f(x)v(x) \, dx</math> is also an inner product, this time on the Lp space <math>L^2(0,1)</math>. An application of the Riesz representation theorem for Hilbert spaces shows that there is a unique <math>u</math> solving (2) and therefore P1.

The variational form of P2

If we integrate by parts using a form of Green's theorem, we see that if <math>u</math> solves P2, then for any <math>v</math>,

<math>\int_\Omega fv \, ds = -\int_\Omega \nabla u \cdot \nabla v \, ds = -\phi(u,v),</math>

where <math>\nabla</math> denotes the gradient and <math>\cdot</math> denotes the dot product in the two-dimensional plane. Once more <math>\phi</math> can be turned into an inner product on a suitable space <math>H_0^1(\Omega)</math> of "once differentiable" functions of <math>\Omega</math> that are zero on <math>\partial \Omega</math>. We have also assumed that <math>v \in H_0^1(\Omega)</math>. The space <math>H_0^1(\Omega)</math> can no longer be defined in terms of absolutely continuous functions, but see Sobolev spaces. Existence and uniqueness of the solution can also be shown.

Discretization

The basic idea is to replace the infinite dimensional linear problem:

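Find <math>u \in H_0^1</math> such that <math>\forall v \in H_0^1, \; -\phi(u,v) = \int fv</math>;

with a finite dimensional version:

(3) Find <math>u \in V</math> such that <math>\forall v \in V, \; -\phi(u,v) = \int fv</math>;

where <math>V</math> is a finite dimensional subspace of <math>H_0^1</math>. There are many possible choices for <math>V</math> (one possibility leads to the spectral method). However, for the finite element method we take <math>V</math> to be a space of piecewise linear functions.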

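For problem P1, we take the interval <math>(0,1)</math>, choose <math>n</math> values <math>x_k</math> with <math>0 = x_0 < x_1 < \dots < x_n < x_{n+1} = 1</math> and we define <math>V</math> by

<math>V = \{ v : [0,1] \rightarrow \mathbb{R} \; : \; v \mbox{ is continuous, } v|_{[x_k,x_{k+1}]} \mbox{ is linear for } k = 0,\dots,n \mbox{, and } v(0) = v(1) = 0 \}</math>

where we define <math>x_0 = 0</math> and <math>x_{n+1} = 1</math>. Observe that functions in <math>V</math> are not differentiable according to the elementary definition of calculus. Indeed, if <math>v \in V</math> then the derivative is typically not defined at any <math>x = x_k</math>, <math>k = 1,\dots,n</math>. However, the derivative exists at every other value of <math>x</math> and one can use this derivative for the purpose of integration by parts.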

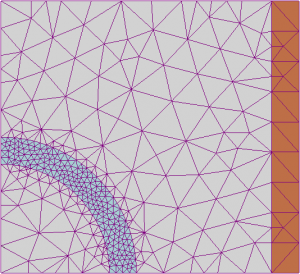

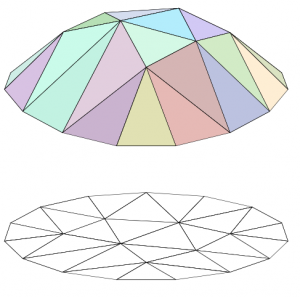

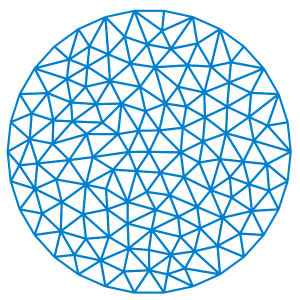

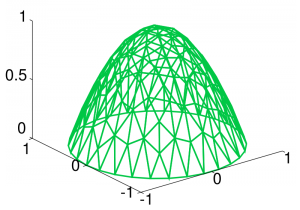

For problem P2, we need V to be a set of functions of Ω. In the figure on the right, we have illustrated a triangulation of a 15-sided polygonal region Ω in the plane (below), and a piecewise linear function (above, in color) of this polygon which is linear on each triangle of the triangulation; the space V would consist of functions that are linear on each triangle of the chosen triangulation.

One often reads <math>V_h</math> instead of <math>V</math> in the literature. The reason is that one hopes that as the underlying triangular grid becomes finer and finer, the solution of the discrete problem (3) will in some sense converge to the solution of the original boundary value problem P2. The triangulation is then indexed by a real valued parameter <math>h > 0</math> which one takes to be very small. This parameter will be related to the size of the largest or average triangle in the triangulation. As we refine the triangulation, the space of piecewise linear functions <math>V</math> must also change with <math>h</math>, hence the notation <math>V_h</math>. Since we do not perform such an analysis, we will not use this notation.

Choosing a basis

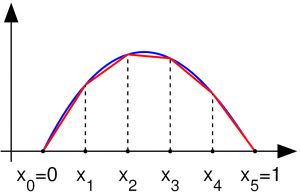

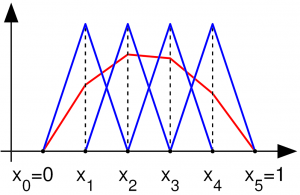

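To complete the discretization, we must select a basis of <math>V</math>. In the one-dimensional case, for each control point <math>x_k</math> we will choose the piecewise linear function <math>v_k</math> in <math>V</math> whose value is <math>1</math> at <math>x_k</math> and zero at every <math>x_j, \; j \neq k</math>, i.e.,

<math>v_k(x) = \begin{cases} \dfrac{x - x_{k-1}}{x_k - x_{k-1}} & \mbox{if } x \in [x_{k-1}, x_k], \\ \dfrac{x_{k+1} - x}{x_{k+1} - x_k} & \mbox{if } x \in [x_k, x_{k+1}], \\ 0 & \mbox{otherwise}, \end{cases}</math>

for <math>k = 1,\dots,n</math>; this basis is a shifted and scaled tent function. For the two-dimensional case, we choose again one basis function <math>v_k</math> per vertex <math>x_k</math> of the triangulation of the planar region <math>\Omega</math>. The function <math>v_k</math> is the unique function of <math>V</math> whose value is <math>1</math> at <math>x_k</math> and zero at every <math>x_j, \; j \neq k</math>.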
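The one-dimensional tent functions can be sketched in a few lines of Python. This is only an illustration: the function name `hat`, the NumPy-based evaluation and the non-uniform sample grid are assumptions made here, not part of the original text.

```python
import numpy as np

def hat(k, nodes, x):
    """Evaluate the tent function v_k at the points x.

    nodes holds x_0 < x_1 < ... < x_{n+1}; k must be an interior index 1..n.
    """
    x = np.asarray(x, dtype=float)
    left, mid, right = nodes[k - 1], nodes[k], nodes[k + 1]
    rising = (x - left) / (mid - left)       # branch on [x_{k-1}, x_k]
    falling = (right - x) / (right - mid)    # branch on [x_k, x_{k+1}]
    # The smaller branch is the tent; clipping at 0 handles points outside the support.
    return np.maximum(0.0, np.minimum(rising, falling))

nodes = np.array([0.0, 0.1, 0.3, 0.6, 1.0])  # assumed non-uniform grid with n = 3
# Row k-1 holds v_k evaluated at every node x_0, ..., x_4.
values_at_nodes = np.array([hat(k, nodes, nodes) for k in (1, 2, 3)])
print(values_at_nodes)
```

Each row should contain a single 1 at its own node and 0 at every other node, which is the defining property of this basis. Because of it, the coefficients of a function in this basis are simply its values at the interior nodes.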

Depending on the author, the word "element" in "finite element method" refers either to the triangles in the domain, the piecewise linear basis functions, or both. So for instance, an author interested in curved domains might replace the triangles with curved primitives and describe the elements as being curvilinear. On the other hand, some authors replace "piecewise linear" by "piecewise quadratic" or even "piecewise polynomial". The author might then say "higher order element" instead of "higher degree polynomial." The finite element method is not restricted to triangles (or tetrahedra in 3-D, or higher order simplexes in multidimensional spaces), but can be defined on quadrilateral sub-domains (hexahedra, prisms, or pyramids in 3-D, and so on). Higher order shapes (curvilinear elements) can be defined with polynomial and even non-polynomial shapes (e.g. ellipse or circle).

Methods that use higher degree piecewise polynomial basis functions are often called spectral element methods, especially if the degree of the polynomials increases as the triangulation size h goes to zero.

More advanced implementations (adaptive finite element methods) use error estimation theory to assess the quality of the results and modify the mesh during the solution, aiming to achieve an approximate solution within some bounds of the 'exact' solution of the continuum problem. Mesh adaptivity may use various techniques, of which the most popular are:

- moving nodes (r-adaptivity)

- refining (and un-refining) elements (h-adaptivity)

- changing order of base functions (p-adaptivity)

- combinations of the above (e.g. hp-adaptivity)

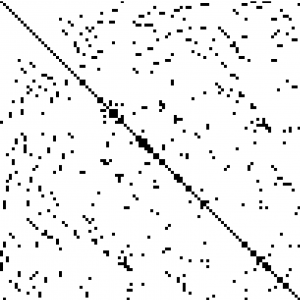

Small support of the basis

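The primary advantage of this choice of basis is that the inner products

<math>\langle v_j, v_k \rangle = \int_0^1 v_j v_k \, dx</math>

and

<math>\phi(v_j, v_k) = \int_0^1 v_j' v_k' \, dx</math>

will be zero for almost all <math>j, k</math>. In the one-dimensional case, the support of <math>v_k</math> is the interval <math>[x_{k-1}, x_{k+1}]</math>. Hence, the integrands of <math>\langle v_j,v_k \rangle</math> and <math>\phi(v_j,v_k)</math> are identically zero whenever <math>|j - k| > 1</math>.

Similarly, in the planar case, if <math>x_j</math> and <math>x_k</math> do not share an edge of the triangulation, then the integrals

<math>\int_\Omega v_j v_k \, ds</math>

and

<math>\int_\Omega \nabla v_j \cdot \nabla v_k \, ds</math>

are both zero.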
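The effect of the small support can be observed numerically in the one-dimensional case. The sketch below approximates every inner product of two tent functions by trapezoidal quadrature and collects the results in a matrix. The uniform grid, the number of interior nodes, the quadrature resolution and the names `hat`, `gram` and `far_apart` are assumptions made for illustration.

```python
import numpy as np

def hat(k, nodes, x):
    """Tent function v_k: 1 at nodes[k], 0 at every other node, linear in between."""
    rising = (x - nodes[k - 1]) / (nodes[k] - nodes[k - 1])
    falling = (nodes[k + 1] - x) / (nodes[k + 1] - nodes[k])
    return np.maximum(0.0, np.minimum(rising, falling))

n = 6                                     # assumed number of interior nodes
nodes = np.linspace(0.0, 1.0, n + 2)      # x_0 = 0, ..., x_{n+1} = 1
x = np.linspace(0.0, 1.0, 7001)           # fine quadrature grid on [0, 1]
weights = np.full(x.size, x[1] - x[0])    # trapezoidal quadrature weights
weights[[0, -1]] *= 0.5

# gram[j-1, k-1] approximates <v_j, v_k>, the integral of v_j v_k over (0, 1).
basis = np.array([hat(k, nodes, x) for k in range(1, n + 1)])
gram = (basis * weights) @ basis.T

j, k = np.indices(gram.shape)
far_apart = np.abs(j - k) > 1             # index pairs whose supports do not overlap
print(np.abs(gram[far_apart]).max())      # largest entry away from the three central diagonals
```

Only the main diagonal and its two neighbours should carry nonzero entries; on a uniform grid of spacing <math>h</math> these are <math>2h/3</math> and <math>h/6</math>, respectively.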

Matrix form of the problem

If we write <math>u(x)=\sum_{k=1}^n u_k v_k(x)</math> and <math>f(x)=\sum_{k=1}^n f_k v_k(x)</math> then problem (3) becomes

(4) <math>-\sum_{k=1}^n u_k \phi(v_k,v_j) = \sum_{k=1}^n f_k \int v_k v_j \, dx</math> for <math>j = 1,\dots,n.</math>

If we denote by <math>\mathbf{u}</math> and <math>\mathbf{f}</math> the column vectors <math>(u_1,\dots,u_n)^t</math> and <math>(f_1,\dots,f_n)^t</math>, and if we let <math>L = (L_{ij})</math> and <math>M = (M_{ij})</math> be matrices whose entries are <math>L_{ij} = \phi(v_i,v_j)</math> and <math>M_{ij}=\int v_i v_j</math>, then we may rephrase (4) as

(5) <math>-L \mathbf{u} = M \mathbf{f}.</math>

As we have discussed before, most of the entries of <math>L</math> and <math>M</math> are zero because the basis functions <math>v_k</math> have small support. So we now have to solve a linear system in the unknown <math>\mathbf{u}</math> where most of the entries of the matrix <math>L</math>, which we need to invert, are zero.

Such matrices are known as sparse matrices, and there are efficient solvers for such problems (much more efficient than actually inverting the matrix). In addition, <math>L</math> is symmetric and positive definite, so a technique such as the conjugate gradient method is favored. For problems that are not too large, sparse LU decompositions and Cholesky decompositions still work well. For instance, MATLAB's backslash operator (which uses sparse direct factorizations such as LU and Cholesky) can be sufficient for meshes with a hundred thousand vertices.

The matrix <math>L</math> is usually referred to as the stiffness matrix, while the matrix <math>M</math> is dubbed the mass matrix. Compare this to the simplistic case of a single spring governed by the equation Kx = f, where K is the stiffness, x (or u) is the displacement and f is the force.
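To make the matrix form concrete, here is a minimal Python sketch that assembles the stiffness and mass matrices for P1 on a uniform grid and solves <math>-L\mathbf{u} = M\mathbf{f}</math>. Several things are assumptions made for illustration: the uniform spacing <math>h</math>, the closed-form entries for tent functions on such a grid (<math>2/h</math> and <math>-1/h</math> for <math>L</math>, <math>2h/3</math> and <math>h/6</math> for <math>M</math>), the test data <math>f(x) = -\pi^2 \sin(\pi x)</math> with exact solution <math>\sin(\pi x)</math>, and the function name `solve_p1`. A dense NumPy solve is used only for brevity.

```python
import numpy as np

def solve_p1(n, f):
    """Solve u'' = f on (0, 1), u(0) = u(1) = 0, with n interior nodes on a uniform grid."""
    h = 1.0 / (n + 1)
    x = np.linspace(0.0, 1.0, n + 2)[1:-1]      # interior nodes x_1, ..., x_n
    off = np.ones(n - 1)
    # Stiffness matrix L_ij = integral of v_i' v_j' (tridiagonal for tent functions).
    L = (2.0 * np.eye(n) - np.diag(off, 1) - np.diag(off, -1)) / h
    # Mass matrix M_ij = integral of v_i v_j.
    M = (4.0 * np.eye(n) + np.diag(off, 1) + np.diag(off, -1)) * h / 6.0
    f_vec = f(x)                                # nodal values f_k = f(x_k)
    # Dense solve for brevity; a sparse or conjugate gradient solver would replace it at scale.
    u_vec = np.linalg.solve(-L, M @ f_vec)
    return x, u_vec

x, u_vec = solve_p1(49, lambda s: -np.pi**2 * np.sin(np.pi * s))
max_error = np.max(np.abs(u_vec - np.sin(np.pi * x)))
print(max_error)
```

The printed value is the largest deviation from the exact solution at the nodes, and it should shrink as `n` grows. For large meshes the dense matrices here would be replaced by a sparse storage format, since only three diagonals are nonzero.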

General form of the finite element method

In general, the finite element method is characterized by the following process.

- One chooses a grid for <math>\Omega</math>. In the preceding treatment, the grid consisted of triangles, but one can also use squares or curvilinear polygons.

- Then, one chooses basis functions. In our discussion, we used piecewise linear basis functions, but it is also common to use piecewise polynomial basis functions.

A separate consideration is the smoothness of the basis functions. For second order elliptic boundary value problems, piecewise polynomial basis functions that are merely continuous suffice (i.e., the derivatives are discontinuous). For higher order partial differential equations, one must use smoother basis functions. For instance, for a fourth order problem such as <math>u_{xxxx} + u_{yyyy} = f</math>, one may use piecewise quadratic basis functions that are <math>C^1</math>.

Typically, one has an algorithm for taking a given mesh and subdividing it. If the main method for increasing precision is to subdivide the mesh, one has an h-method (h is customarily the diameter of the largest element in the mesh). In this manner, if one shows that the error with a grid h is bounded above by <math>Ch^p</math>, for some <math>C<\infty</math> and <math>p > 0</math>, then one has an order p method. Under certain hypotheses (for instance, if the domain is convex), a method using piecewise polynomials of degree d will have an error of order p = d + 1.
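The order of an h-method can be estimated empirically by solving the same problem on successively halved grids and comparing the errors. The sketch below does this for P1 with piecewise linear elements (d = 1). The test problem with exact solution <math>\sin(\pi x)</math>, the uniform grids, the closed-form matrix entries and the choice to measure the error in the maximum norm at the nodes are all assumptions made here; the text above does not fix a particular norm.

```python
import numpy as np

def nodal_error(n):
    """Grid spacing and max nodal error of the piecewise linear FEM solution of P1."""
    h = 1.0 / (n + 1)
    x = np.linspace(0.0, 1.0, n + 2)[1:-1]
    off = np.ones(n - 1)
    L = (2.0 * np.eye(n) - np.diag(off, 1) - np.diag(off, -1)) / h        # stiffness
    M = (4.0 * np.eye(n) + np.diag(off, 1) + np.diag(off, -1)) * h / 6.0  # mass
    f_vec = -np.pi**2 * np.sin(np.pi * x)   # assumed data; exact solution is sin(pi x)
    u_vec = np.linalg.solve(-L, M @ f_vec)
    return h, np.max(np.abs(u_vec - np.sin(np.pi * x)))

sizes = [7, 15, 31, 63]                      # each refinement halves h
hs, errors = zip(*(nodal_error(n) for n in sizes))
# If error ~ C h^p, then p ~ log(e_coarse / e_fine) / log(h_coarse / h_fine).
orders = [np.log(errors[i] / errors[i + 1]) / np.log(hs[i] / hs[i + 1])
          for i in range(len(sizes) - 1)]
print(orders)
```

For this test problem the estimated orders should approach 2, in line with p = d + 1 for d = 1.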

If instead of making h smaller, one increases the degree of the polynomials used in the basis functions, one has a p-method. If one simultaneously makes h smaller while making p larger, one has an hp-method. High order methods (with large p) are called spectral element methods, which are not to be confused with spectral methods.

For vector partial differential equations, the basis functions may take values in <math>\mathbb{R}^n</math>.

Comparison to the finite difference method

The finite difference method (FDM) is an alternative way for solving PDEs. The differences between FEM and FDM are:

- The finite difference method is an approximation to the differential equation; the finite element method is an approximation to its solution.

- The most attractive feature of the FEM is its ability to handle complex geometries (and boundaries) with relative ease. While FDM in its basic form is restricted to handle rectangular shapes and simple alterations thereof, the handling of geometries in FEM is theoretically straightforward.

- The most attractive feature of finite differences is that it can be very easy to implement.

- There are several ways one could consider the FDM a special case of the FEM approach. One might choose basis functions as either piecewise constant functions or Dirac delta functions. In both approaches, the approximations are defined on the entire domain, but need not be continuous. Alternatively, one might define the function on a discrete domain, with the result that the continuous differential operator no longer makes sense; however, this approach is not FEM.

- There are reasons to consider the mathematical foundation of the finite element approximation more sound, for instance, because the quality of the approximation between grid points is poor in FDM.

- The quality of a FEM approximation is often higher than in the corresponding FDM approach, but this is extremely problem dependent and several examples to the contrary can be provided.
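For comparison, a finite difference treatment of the one-dimensional problem <math>u'' = f</math> with zero boundary values replaces the second derivative at each grid point by a central difference quotient. The following minimal Python sketch illustrates the ease of implementation mentioned above. The uniform grid, the test data with exact solution <math>\sin(\pi x)</math> and the variable names are assumptions made for illustration.

```python
import numpy as np

n = 49                                      # assumed number of interior grid points
h = 1.0 / (n + 1)
x = np.linspace(0.0, 1.0, n + 2)[1:-1]      # interior grid points
off = np.ones(n - 1)

# Central second difference: u''(x_k) ~ (u_{k-1} - 2 u_k + u_{k+1}) / h^2,
# with the boundary values u_0 = u_{n+1} = 0 already dropped.
D2 = (-2.0 * np.eye(n) + np.diag(off, 1) + np.diag(off, -1)) / h**2

f_vec = -np.pi**2 * np.sin(np.pi * x)       # assumed data; exact solution is sin(pi x)
u_fdm = np.linalg.solve(D2, f_vec)
fdm_error = np.max(np.abs(u_fdm - np.sin(np.pi * x)))
print(fdm_error)
```

On a uniform one-dimensional grid this difference matrix coincides, up to a factor of <math>-1/h</math>, with the stiffness matrix obtained from piecewise linear tent functions, so the two methods differ here only in how the right-hand side is treated.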

Generally, FEM is the method of choice in all types of analysis in structural mechanics (i.e. solving for deformation and stresses in solid bodies or dynamics of structures), while computational fluid dynamics (CFD) tends to use FDM or other methods (e.g., the finite volume method). CFD problems usually require discretization of the problem into a large number of cells or grid points (millions and more), so the cost of the solution favors a simpler, lower order approximation within each cell. This is especially true for 'external flow' problems, like air flow around a car or an airplane, or weather simulation over a large area.

There are many finite element software packages, some free and some proprietary.